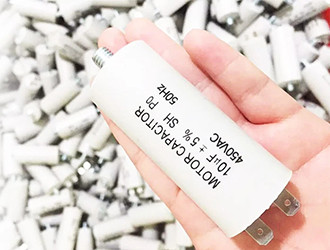

Dingfeng Capacitor: What Does the Voltage Rating on a Capacitor Mean?

- Issue Time: Apr 12, 2019

Summary

The voltage rating on a capacitor is the maximum voltage that the capacitor can safely be exposed to and charged to.

What Does the Voltage Rating on a Capacitor Mean?

The voltage rating on a capacitor is the maximum voltage that the capacitor can safely be exposed to and charged to.

Remember that capacitors are storage devices. Each one is specified by two numbers: a capacitance (1 µF, 100 µF, 1000 µF, etc.) and a voltage rating (10 V, 25 V, 50 V, etc.). The capacitance determines how much charge the capacitor holds at a given voltage (Q = C × V), and the voltage rating is the highest voltage at which it can safely do so. So when choosing a capacitor, you need to know what capacitance you want and what voltage it will be exposed to.
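As a rough illustration of how capacitance and voltage together determine what a capacitor stores, the sketch below applies the standard relations Q = C × V (charge) and E = ½ × C × V² (energy). The function names and the 100 µF / 25 V example values are chosen here for illustration only.

```python
def stored_charge(capacitance_farads: float, voltage_volts: float) -> float:
    """Charge in coulombs held at a given voltage: Q = C * V."""
    return capacitance_farads * voltage_volts


def stored_energy(capacitance_farads: float, voltage_volts: float) -> float:
    """Energy in joules held at a given voltage: E = 1/2 * C * V^2."""
    return 0.5 * capacitance_farads * voltage_volts ** 2


# Example values (assumed for illustration): a 100 µF capacitor charged to 25 V.
capacitance = 100e-6  # farads
voltage = 25.0        # volts

print(f"Q = {stored_charge(capacitance, voltage) * 1e3:.2f} mC")  # Q = 2.50 mC
print(f"E = {stored_energy(capacitance, voltage) * 1e3:.2f} mJ")  # E = 31.25 mJ
```

Note that the voltage rating does not appear in either formula. It only sets the upper limit on the voltage you may safely apply.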

A good rule when choosing a capacitor's voltage rating is not to pick a rating exactly equal to the voltage the power supply will apply. Leave a generous margin. If a capacitor will be charged to 25 volts, don't choose a capacitor rated for exactly 25 volts; leave room in case the supply voltage ever rises for any reason.

For example, if you measure the voltage of a new 9V battery, you will notice that it reads above 9 volts. A capacitor rated for exactly 9 volts would therefore be exposed to more than its maximum specified voltage (the voltage rating). In a case like this the small excess usually isn't a problem, but allowing a margin is still good engineering practice.

You can't really go wrong choosing a capacitor with a higher voltage rating than the supply voltage, but you can definitely go wrong choosing one with a lower rating than the voltage it will be exposed to. If you charge a capacitor from a supply whose voltage exceeds the capacitor's rating, you risk the capacitor failing, possibly by exploding, and becoming unusable. So never expose a capacitor to a voltage higher than its voltage rating.

Some say a good engineering practice is to choose a capacitor rated for double the power supply voltage you will use to charge it. So if a capacitor is going to be exposed to 25 volts, to be on the safe side, it's best to use a capacitor rated for 50 volts.
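The margin rule above can be sketched as a small helper that multiplies the highest expected voltage by a margin factor and picks the next available rating at or above the result. This is only an illustrative sketch: the function name, the default factor of 2 (taken from the "double the voltage" guideline), and the list of ratings are assumptions, so check the ratings your supplier actually offers.

```python
# Voltage ratings assumed for illustration; real product lines may differ.
AVAILABLE_RATINGS_V = [6.3, 10, 16, 25, 35, 50, 63, 100]


def pick_voltage_rating(max_applied_v, margin_factor=2.0, ratings=AVAILABLE_RATINGS_V):
    """Return the smallest available rating >= max_applied_v * margin_factor."""
    required = max_applied_v * margin_factor
    for rating in sorted(ratings):
        if rating >= required:
            return rating
    raise ValueError(f"no available rating covers {required} V")


# A capacitor exposed to 25 V, with the "double the voltage" guideline.
print(pick_voltage_rating(25))  # 50

# A nominal 9 V battery, doubled to 18 V, rounds up to the next rating.
print(pick_voltage_rating(9))   # 25
```

Passing the highest voltage you actually expect (for instance, the measured voltage of a fresh battery rather than its nominal value) keeps the margin meaningful.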
